Recent years have seen exciting innovations in AI-driven healthcare technology, and diagnostics is no exception. As big data becomes more readily available, a growing number of algorithms can be trained on very large data sets. In radiology, AI-powered tools can analyse images such as X-rays and MRI scans to detect abnormalities, identify disease patterns and make predictions. These insights can help clinicians tailor treatments and, in some cases, reach a diagnosis earlier.

Some innovations are already transforming the space. Take Viz.ai, which uses AI algorithms to help stroke patients get prompt treatment. Its technology draws on medical imaging data from hospitals, analyses the images and flags potential abnormalities. It also supports care coordination through a platform where care teams can communicate and collaborate. Another example is the collaboration between the Massachusetts Institute of Technology and Harvard, which is using AI and machine learning (ML) for drug discovery and development with the ambitious aim of curing all diseases. Yet overall, healthcare remains cautious about adopting new technology, and progress has been relatively slow compared with industries such as retail and fintech.

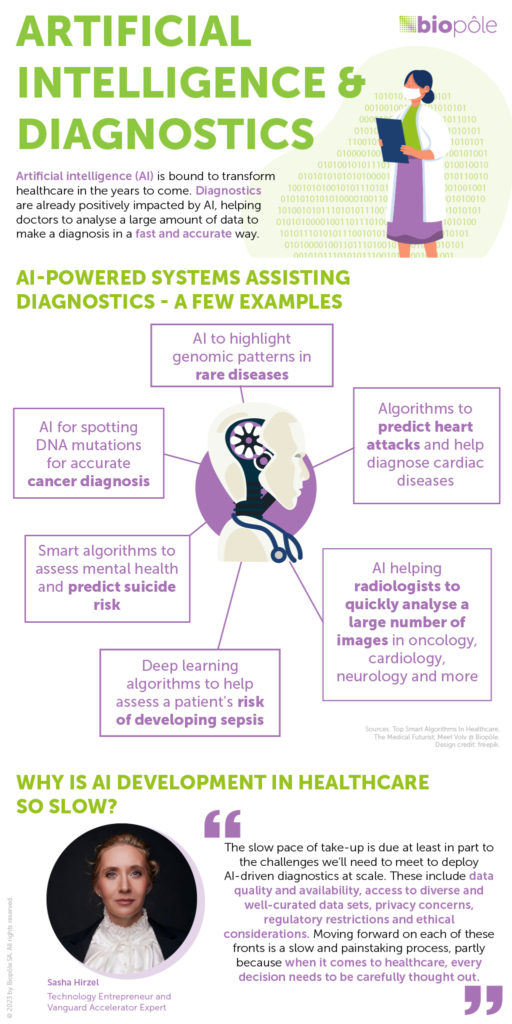

Why is this? The slow take-up is due at least in part to the challenges that must be overcome before AI-driven diagnostics can be deployed at scale. These include data quality and availability, access to diverse and well-curated data sets, privacy concerns, regulatory restrictions and ethical considerations. Progress on each of these fronts is slow and painstaking, partly because in healthcare every decision needs to be carefully thought through.

One of the most critical challenges is the susceptibility of AI models to bias, which can lead to inaccurate diagnoses. In dermatology, for instance, an algorithm trained to detect skin cancer solely on images of patients with lighter skin may misdiagnose patients with darker skin tones. The consequences of such bias can be devastating for patients.

Diversity in AI goes beyond ethnicity, age and gender. It also encompasses socioeconomic status, geography, access to healthcare and cultural context. If the training data comes predominantly from patients in certain geographical areas, the model may not generalise well to populations with different characteristics or healthcare challenges, and it can produce biased predictions or recommendations when deployed in more diverse settings.

Researchers and developers are increasingly recognising the importance of diversity and fairness in AI development, but there is still work to do. To mitigate bias, it is crucial to ensure that training data sets are diverse and representative of the target population. This means actively seeking data from underrepresented regions and populations, spanning different geographical locations, socioeconomic backgrounds and cultural contexts. Ongoing evaluation and monitoring of AI models in real-world settings can also help identify and address any biases that emerge.
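To make the monitoring point concrete, here is a minimal Python sketch of one common check: comparing a model's sensitivity (the share of true cases it catches) across patient subgroups. The function names, the record format, the 5-percentage-point gap threshold and the toy records are all hypothetical, chosen only for illustration. A real audit would use validated clinical data, confidence intervals and several metrics, not a single rate.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Return sensitivity (true-positive rate) per subgroup.

    records: iterable of (group, y_true, y_pred) tuples with binary labels,
    where 1 means disease present (y_true) or flagged by the model (y_pred).
    Groups with no positive cases are omitted, since sensitivity is undefined.
    """
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 1:
                true_pos[group] += 1
    return {g: true_pos[g] / positives[g] for g in positives}


def flag_gaps(rates, max_gap=0.05):
    """List groups whose sensitivity trails the best group by more than max_gap.

    max_gap is a hypothetical tolerance, not a clinical standard.
    """
    best = max(rates.values())
    return sorted(g for g, r in rates.items() if best - r > max_gap)


# Invented toy records for illustration only; these are not real results.
records = [
    ("lighter skin", 1, 1), ("lighter skin", 1, 1),
    ("lighter skin", 1, 1), ("lighter skin", 1, 0),
    ("darker skin", 1, 1), ("darker skin", 1, 0),
    ("darker skin", 1, 0), ("darker skin", 0, 0),
]

rates = sensitivity_by_group(records)
flagged = flag_gaps(rates)
print(flagged)  # ['darker skin']
```

Running the same comparison on fresh data at regular intervals is one simple way to notice a bias that only appears after deployment.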

Yet progress on the entrepreneurial and clinical fronts will not be enough on its own; regulatory change is also essential. I applaud the United States' 2023 omnibus spending bill, which requires sponsors of certain clinical trials to submit plans for enrolling more diverse participants, and I would love to see similar laws adopted globally. Legislation will be key to moving forward with AI innovation. Healthcare is already a heavily regulated industry, yet we need to go further, and regulation becomes all the more important as we move towards more advanced AI. Earlier this year, more than 1,000 signatories, including leading AI researchers, signed an open letter calling for a pause of at least six months on training AI systems more powerful than GPT-4, warning of the risks posed by an out-of-control race to develop ever more powerful 'digital minds'. In healthcare more than in any other field, regulation is crucial. Patients' lives depend on it, and all of us are patients at some point in our lives.

Today, overworked clinicians are under tremendous pressure to deliver good care. AI can help lighten their workload and lead to better patient outcomes, so it makes complete sense to use it. I'm passionate about fixing healthcare and strongly believe AI is part of the solution. I recently listened to an interview with Marc Raibert, founder of Boston Dynamics, a company that makes some of the most advanced robots in the world. He said that scepticism is necessary because that's how we create robust, reliable systems. I believe that, as human beings, we're wired to be sceptical, to a certain extent, of anything unknown. So if there were one motto I would like to see applied to AI in healthcare, it would be this: proceed with caution, but do proceed.